Ego-Foresight

Self-supervised Learning of Agent-Aware Representations for Improved RL

Abstract

Despite the significant advances in Deep Reinforcement Learning (RL) observed in the last decade, the amount of training experience necessary to learn effective policies remains one of the primary concerns in both simulated and real environments. Looking to solve this issue, previous work has shown that improved efficiency can be achieved by separately modeling the agent and environment, but usually requires a supervisory signal. In contrast to RL, humans can perfect a new skill from a small number of trials and often do so without a supervisory signal, making neuroscientific studies of human development a valuable source of inspiration for RL. In particular, we explore the idea of motor prediction, which states that humans develop an internal model of themselves and of the consequences that their motor commands have on the immediate sensory inputs. Our insight is that the movement of the agent provides a cue that allows the duality between the agent and environment to be learned. To instantiate this idea, we present Ego-Foresight (EF), a self-supervised method for disentangling agent information based on motion and prediction. Our main finding is that, when used as an auxiliary task in feature learning, self-supervised agent-awareness improves the sample-efficiency and performance of the underlying RL algorithm. To test our approach, we study the ability of EF to predict agent movement and disentangle agent information. Then, we integrate EF with model-free and model-based RL algorithms to solve simulated control tasks, showing improved sample-efficiency and performance.

Motivation

In robotic tasks, we know that there exists an embodiment, motivating the learning of feature representations that disentangle agent and environment.

Previous work has demonstrated the effectiveness of this strategy in improving efficiency and performance but has relied on the availability of supervisory masks and thus cannot adapt to changes in the body-schema, for example when the robot needs to use tools.

In natural organisms, studies have shown that the receptive fields of neurons responding to hand stimuli expand when using tools, indicating that the representation of self is adaptable.

This leads us to ask: can we disentangle agent and environment without supervision, and allow the body schema to adapt, while retaining the improvements in sample-efficiency?

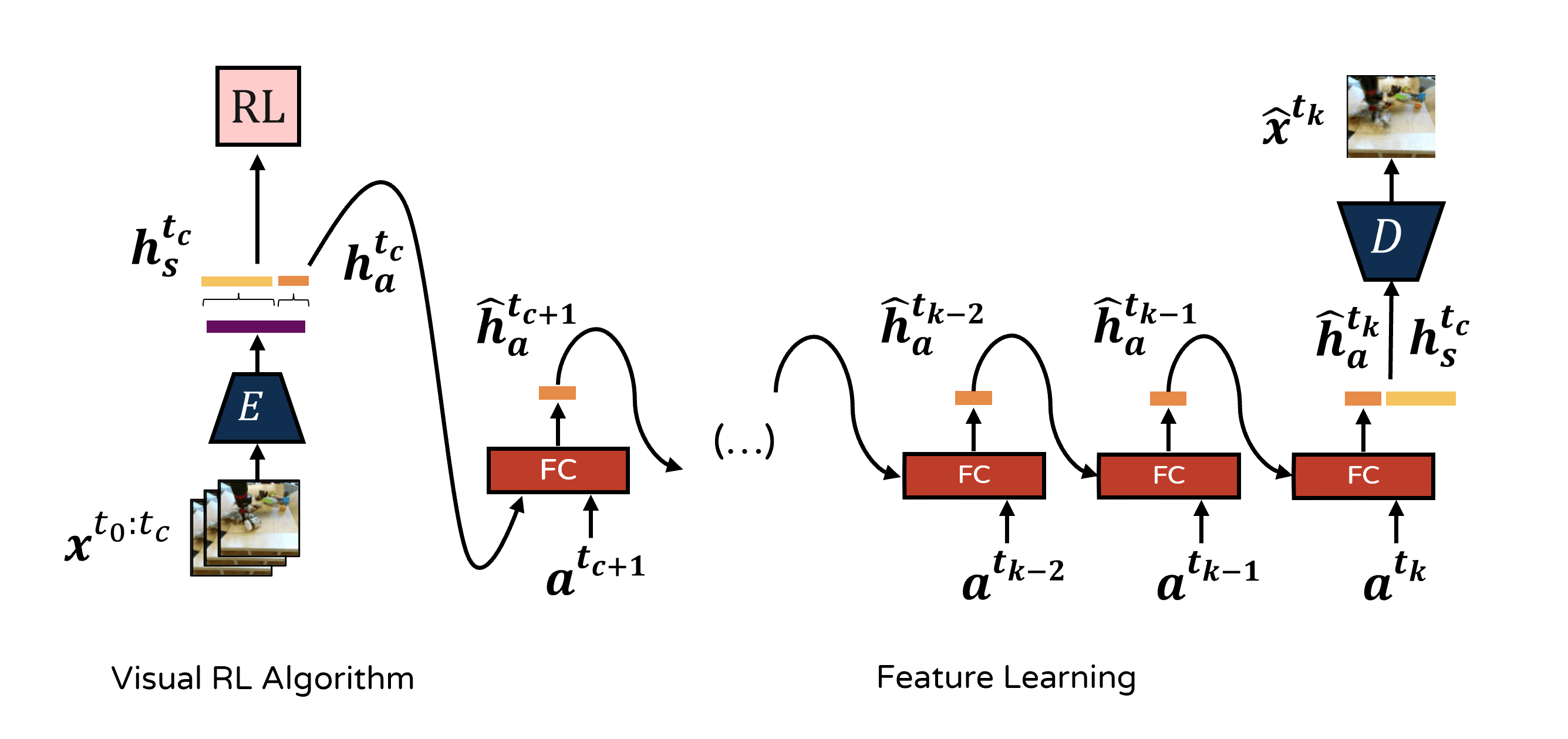

Approach

We start from the premise that regions completely contingent on the agent’s actions can be viewed as part of the agent.

We hypothesize that a forward model with a bottleneck in the feature representation should learn to extract agent features, which can be accurately predicted.

Our architecture uses a recurrent model to incentivize the concentration of agent features in $\mathbf{h}_a$.

We optimize the reconstruction of the future frames as an additional loss term and train in a self-supervised manner using sequences from a replay buffer.
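The forward model and its reconstruction loss described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the layer sizes, the linear maps standing in for learned networks, the function names (`encode`, `rollout`, `foresight_loss`), and the choice to hold the scene features fixed while only the agent features evolve with the actions are all hypothetical, as is the use of mean squared error.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
OBS_DIM, ACT_DIM, SCENE_DIM, AGENT_DIM = 64, 4, 32, 8

# Randomly initialised linear maps stand in for the learned networks.
W_scene = rng.normal(scale=0.1, size=(OBS_DIM, SCENE_DIM))
W_agent = rng.normal(scale=0.1, size=(OBS_DIM, AGENT_DIM))
W_rec_h = rng.normal(scale=0.1, size=(AGENT_DIM, AGENT_DIM))
W_rec_a = rng.normal(scale=0.1, size=(ACT_DIM, AGENT_DIM))
W_dec = rng.normal(scale=0.1, size=(SCENE_DIM + AGENT_DIM, OBS_DIM))


def encode(obs):
    """Map a flattened observation to scene features h_s and agent features h_a."""
    h_s = np.tanh(obs @ W_scene)
    h_a = np.tanh(obs @ W_agent)  # AGENT_DIM is small: this is the bottleneck
    return h_s, h_a


def rollout(h_a, actions):
    """Advance only h_a through time, conditioned on the action sequence."""
    states = []
    for a in actions:
        h_a = np.tanh(h_a @ W_rec_h + a @ W_rec_a)
        states.append(h_a)
    return np.stack(states)  # shape (T, AGENT_DIM)


def foresight_loss(obs_seq, actions):
    """Mean squared error between decoded and true future frames."""
    h_s, h_a = encode(obs_seq[0])                 # features of the context frame
    h_a_future = rollout(h_a, actions)            # predicted agent features
    h_s_tiled = np.tile(h_s, (len(actions), 1))   # scene features held fixed (assumption)
    pred = np.concatenate([h_s_tiled, h_a_future], axis=1) @ W_dec
    return float(np.mean((pred - obs_seq[1:]) ** 2))


# A toy sequence standing in for a sample from the replay buffer:
# 6 observations and the 5 actions taken between them.
obs_seq = rng.normal(size=(6, OBS_DIM))
actions = rng.normal(size=(5, ACT_DIM))
loss = foresight_loss(obs_seq, actions)
```

Because only `h_a` receives the actions, the future frames can only be reconstructed well if the action-contingent content is routed through it, which is the intuition behind the bottleneck.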

Our method can be used as an extension to existing off-policy RL algorithms.
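One way to write such an extension is as a combined objective. The symbols here are assumptions rather than the source's notation: $\mathcal{L}_{\mathrm{RL}}$ is the loss of the base algorithm, $o_{t+k}$ is a true future frame, $\hat{o}_{t+k}$ is the frame decoded from the predicted features, $H$ is the prediction horizon, and $\beta$ is a weighting coefficient. The squared-error form of the reconstruction term is likewise assumed.

$$
\mathcal{L} = \mathcal{L}_{\mathrm{RL}} + \beta \, \frac{1}{H} \sum_{k=1}^{H} \left\lVert \hat{o}_{t+k} - o_{t+k} \right\rVert_2^2
$$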

In this work we apply EF to DrQ-v2 and TD-MPC2.

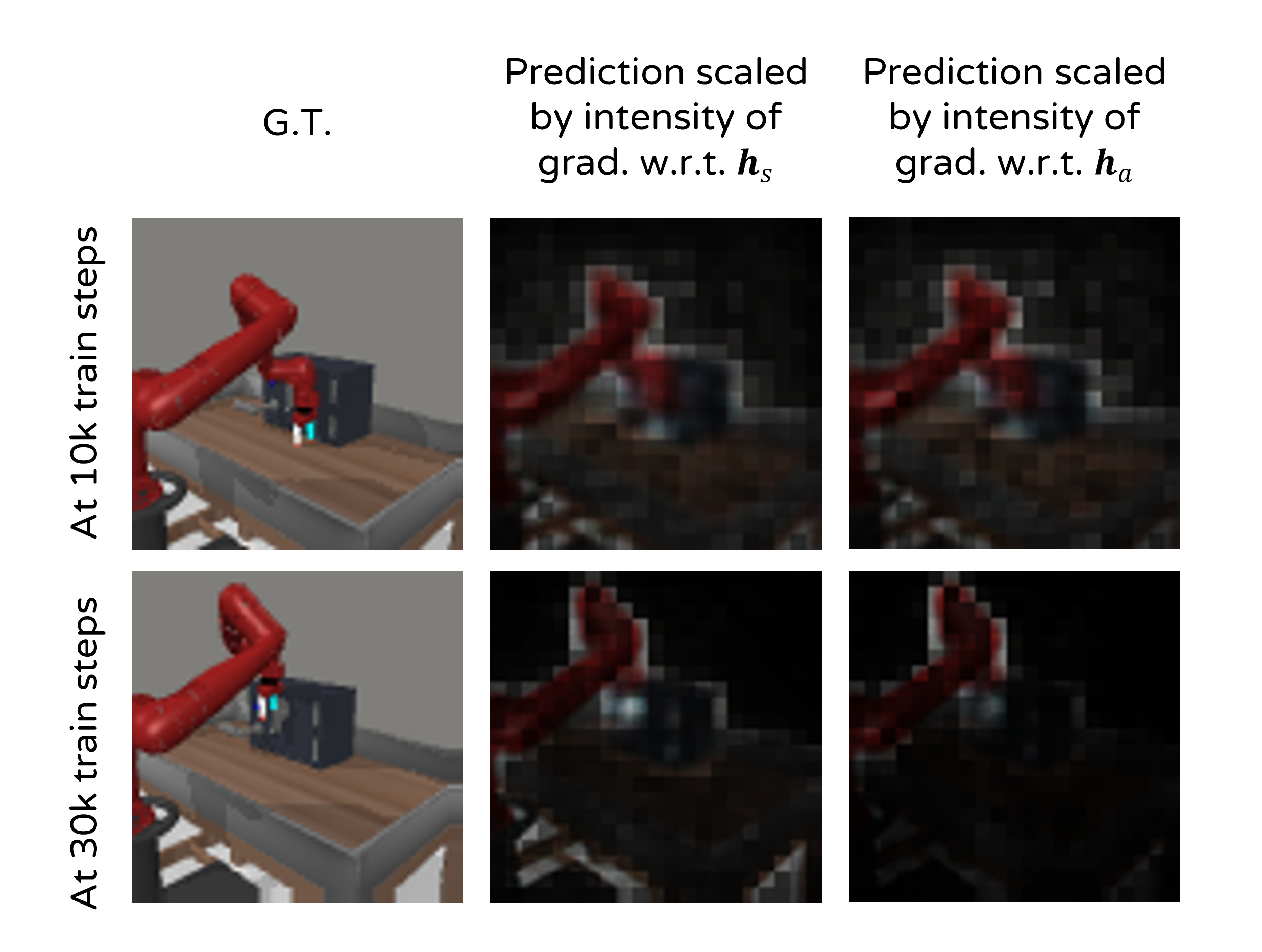

Visualizing the learned features

As training progresses, $\mathbf{h}_a$ specializes in extracting agent features, while $\mathbf{h}_s$ keeps extracting information about the whole scene.

Static information can be overfitted by the decoder and thus is not extracted by either feature vector.

Once the agent starts using the tool, the tool is integrated into the agent representation.

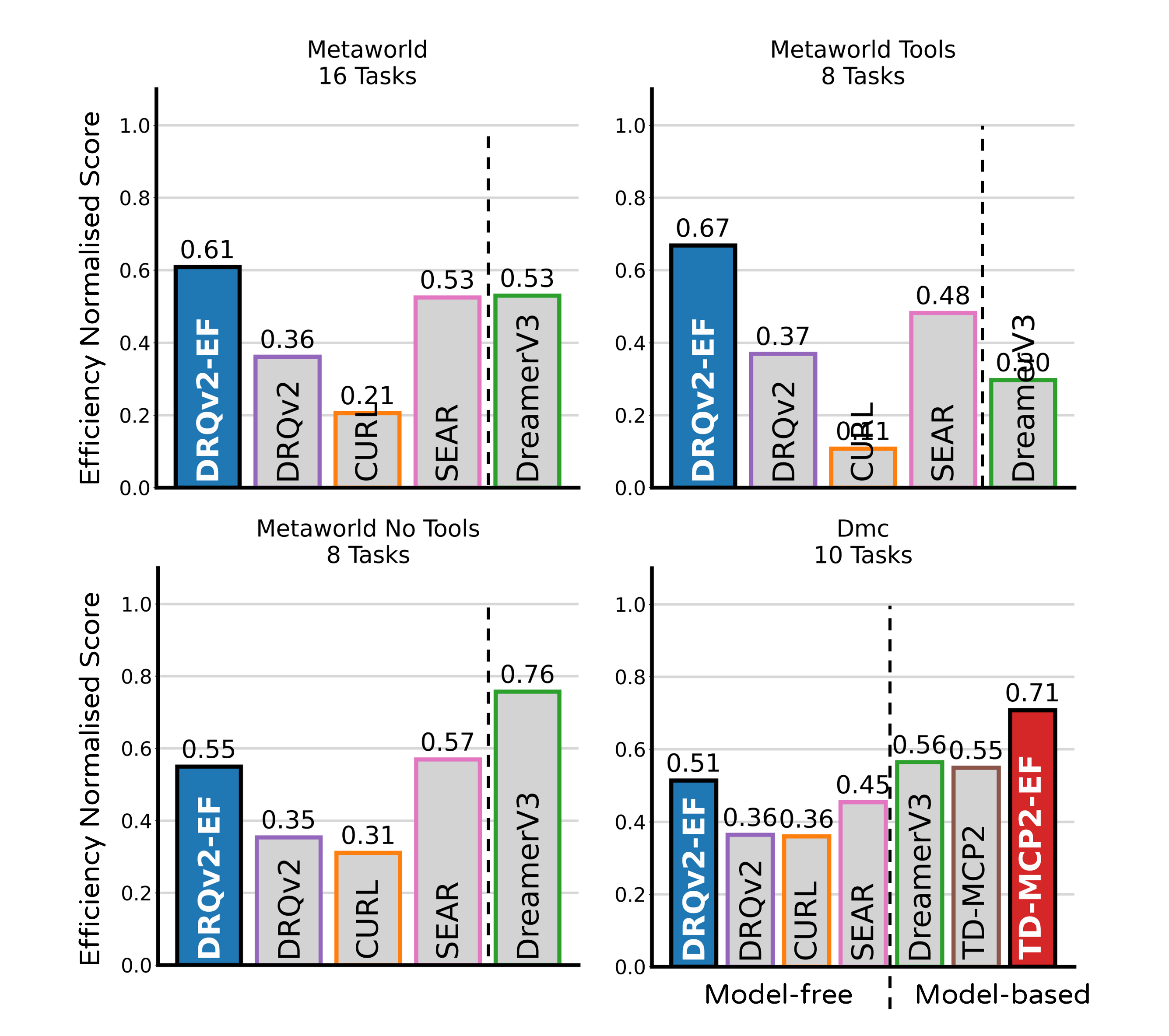

Results